IEEE Robotics and Automation Letters (RA-L) 2022. (Oral Presentation)

Abstract

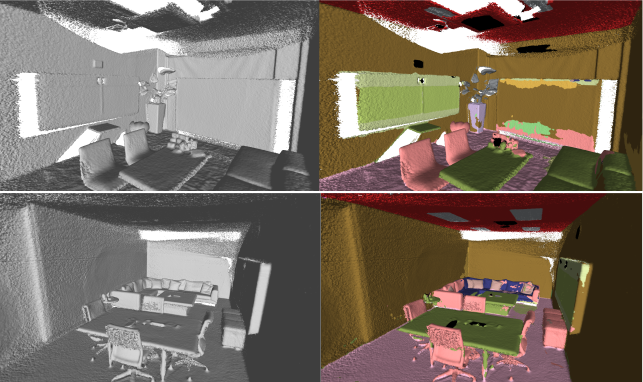

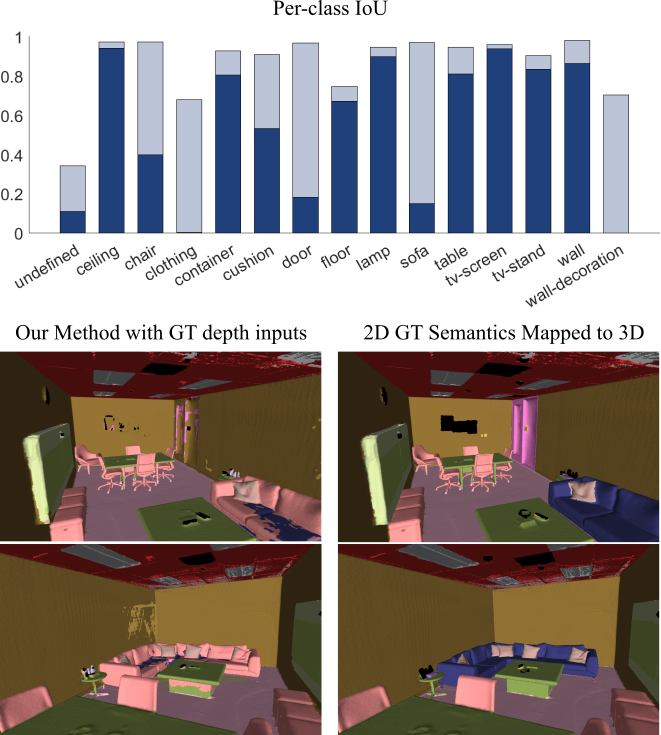

This paper presents a real-time online vision framework to jointly recover an indoor scene's 3D structure and semantic label.

Given noisy depth maps, a camera trajectory, and 2D semantic labels at train time, the proposed deep neural network based approach

learns to fuse the depth over frames with suitable semantic labels in the scene space. Our approach exploits the joint volumetric

representation of the depth and semantics in the scene feature space to solve this task. For a compelling online fusion of the semantic

labels and geometry in real-time, we introduce an efficient vortex pooling block while dropping the use of routing network in online depth

fusion to preserve high-frequency surface details. We show that the context information provided by the semantics of the scene helps the

depth fusion network learn noise-resistant features. Not only that, it helps overcome the shortcomings of the current online depth fusion

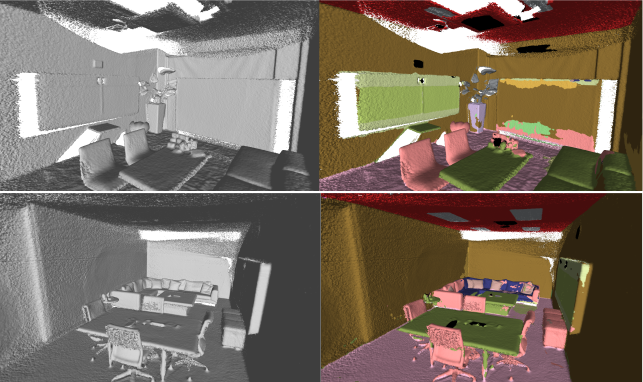

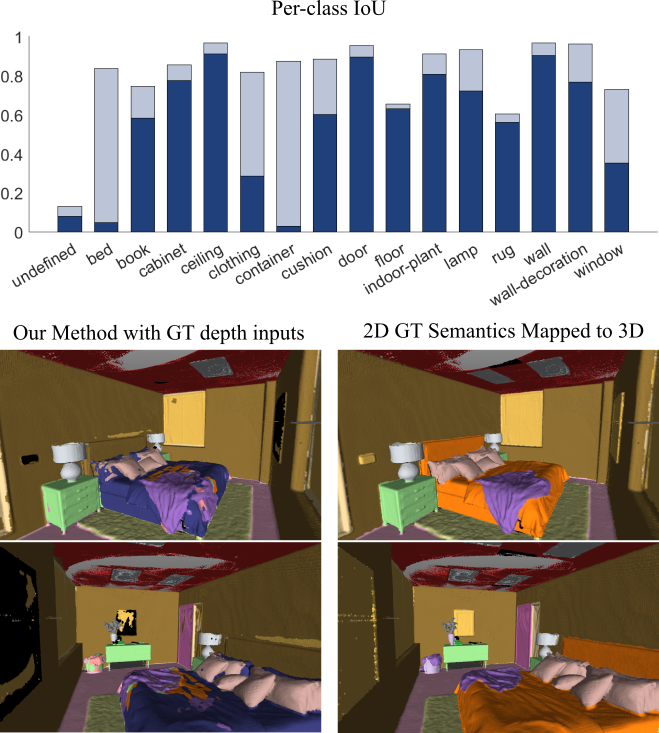

method in dealing with thin object structures, thickening artifacts, and false surfaces. Experimental evaluation on the Replica dataset

shows that our approach can perform depth fusion at 37 and 10 frames per second with an average reconstruction F-score of 88% and 91%, respectively,

depending on the depth map resolution. Moreover, our model shows an average IoU score of 0.515 on the ScanNet 3D semantic benchmark leaderboard.
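For readers unfamiliar with the two metrics quoted above, the following is a minimal sketch of how a mean IoU over semantic classes and an F-score are commonly computed. It is not the paper's evaluation code or the official ScanNet benchmark script. The function names `mean_iou` and `f_score` are hypothetical, and the sketch assumes that labels are given as flat lists of integer class ids and that precision and recall have already been measured.

```python
from collections import Counter


def mean_iou(pred, gt, num_classes):
    """Mean intersection-over-union across classes (illustrative helper).

    pred, gt: equal-length sequences of integer class ids, e.g. one per voxel.
    Classes that appear in neither pred nor gt are skipped.
    """
    if len(pred) != len(gt):
        raise ValueError("pred and gt must have the same length")

    pred_count = Counter(pred)   # how often each class is predicted
    gt_count = Counter(gt)       # how often each class occurs in ground truth
    inter = Counter(p for p, g in zip(pred, gt) if p == g)  # correct per class

    ious = []
    for c in range(num_classes):
        union = pred_count[c] + gt_count[c] - inter[c]
        if union > 0:
            ious.append(inter[c] / union)
    return sum(ious) / len(ious) if ious else 0.0


def f_score(precision, recall):
    """Harmonic mean of precision and recall (both assumed to lie in [0, 1])."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # Toy labels, made up for illustration only.
    pred = [0, 0, 1, 1, 2]
    gt = [0, 1, 1, 1, 2]
    print(mean_iou(pred, gt, num_classes=3))
    print(f_score(0.5, 1.0))
```

For a reconstruction F-score, precision and recall are typically obtained by thresholding point-to-surface distances between the predicted and ground-truth geometry. That step is omitted here because the threshold and sampling details are not stated in this abstract.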

This work was funded by a Focused Research Award from Google (CVL, ETH, 2019-HE-323). Suryansh Kumar's project is supported by "ETH Zurich Foundation and Google, Project Number: 2019-HE-323, 2020-HS-411" for bringing together the best academic and industrial research. The authors thank Mr. Silvan Weder (CVG, ETH) for useful discussions.